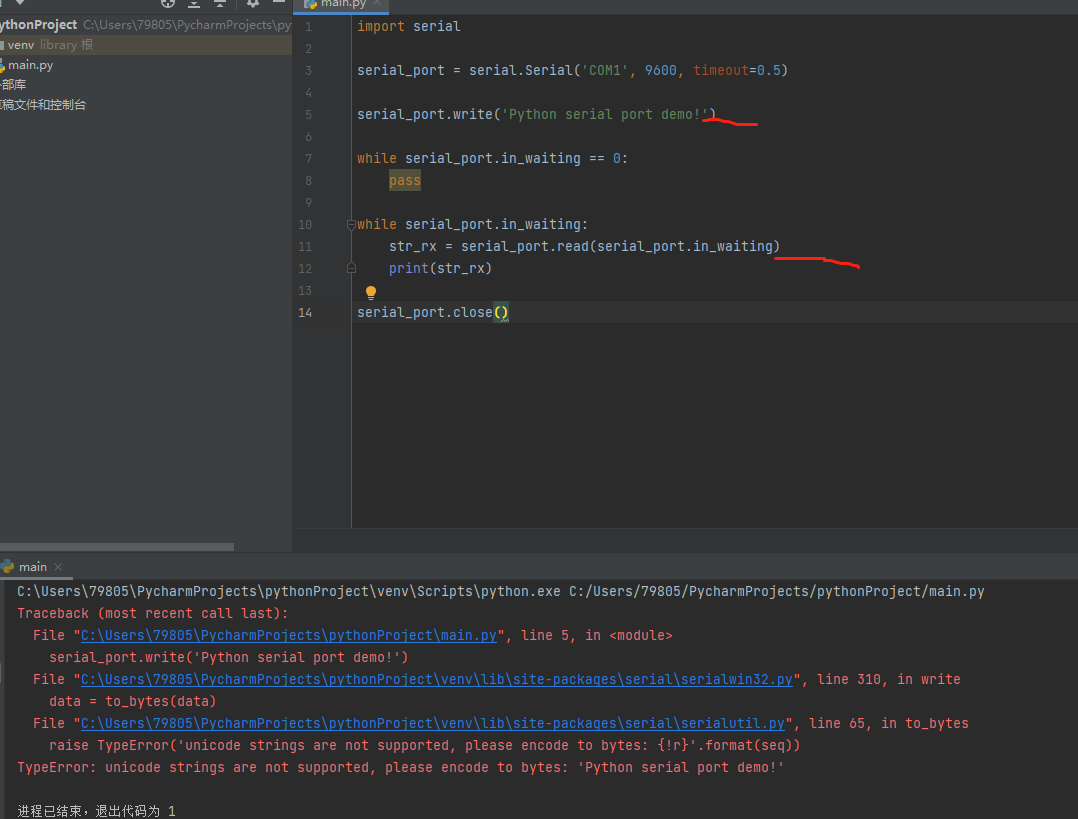

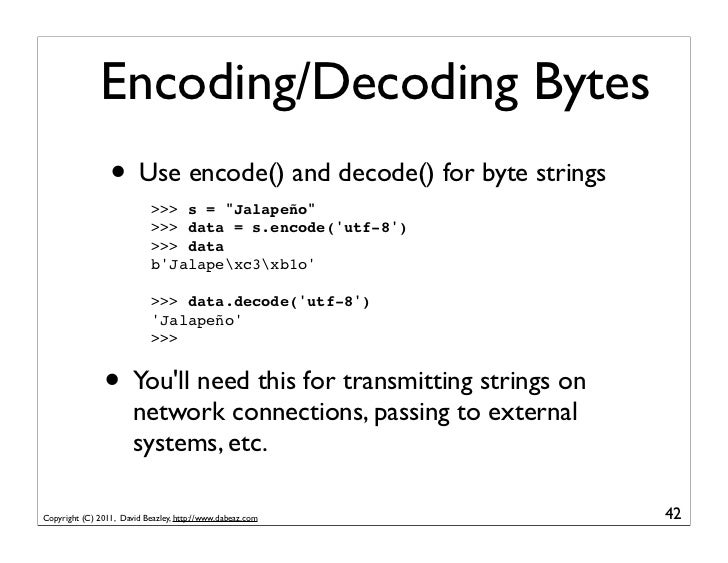

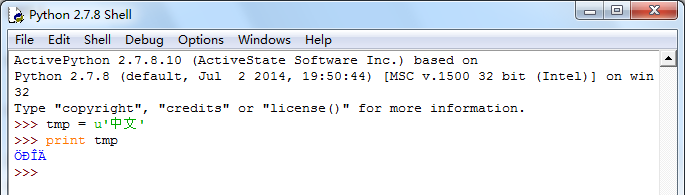

In the first example the string is of type unicode and you attempted to decode it, which is an operation that converts a byte string to unicode. Python helpfully attempted to convert the unicode value to str using the default 'ascii' encoding, but since your string contained a non-ASCII character, you got an error saying that Python was unable to encode a unicode value. Here's an example which shows the type of the input string:

    >>> u"\xa0".decode("ascii", "ignore")
    UnicodeEncodeError: 'ascii' codec can't encode character u'\xa0' in position 0: ordinal not in range(128)

In the second case you do the reverse, attempting to encode a byte string. Encoding is an operation that converts unicode to a byte string, so Python helpfully attempts to convert your byte string to unicode first and, since you didn't give it an ASCII string, the default ascii decoder fails:

    >>> "\xc2".encode("ascii", "ignore")
    UnicodeDecodeError: 'ascii' codec can't decode byte 0xc2 in position 0: ordinal not in range(128)

Aside from getting decode and encode backwards, I think part of the answer here is actually: don't use the ascii encoding.

To begin with, think of str like you would a plain text file. It's just a bunch of bytes with no encoding actually attached to it. How it's interpreted is up to whatever piece of code is reading it. If you don't know what this paragraph is talking about, go read Joel's The Absolute Minimum Every Software Developer Absolutely, Positively Must Know About Unicode and Character Sets right now before you go any further.

Naturally, we're all aware of the mess that created. The answer is to, at least within memory, have a standard encoding for all strings, and that is the job of the unicode type. I'm having trouble tracking down exactly what encoding Python uses internally, but it doesn't really matter here. The point is that you know it's a sequence of bytes that are interpreted a certain way. So you only need to think about the characters themselves, and not the bytes.

The problem is that in practice, you run into both. Some libraries give you a str, and some expect a str. Certainly that makes sense whenever you're streaming a series of bytes (such as to or from disk or over a web request). So you need to be able to translate back and forth.

Enter codecs: it's the translation library between these two data types. You use encode to generate a sequence of bytes (str) from a text string (unicode), and you use decode to get a text string (unicode) from a sequence of bytes (str).

For example:

    >>> s = "I look like a string, but I'm actually a sequence of bytes. \xe2\x9d\xa4"
    >>> u = s.decode('utf-8')
    >>> u
    u"I look like a string, but I'm actually a sequence of bytes. \u2764"

What happened here? I gave Python a sequence of bytes, and then I told it, "Give me the unicode version of this, given that this sequence of bytes is in 'utf-8'." It did as I asked, and those bytes (a heart character) are now treated as a whole, represented by their Unicode codepoint.

Let's go the other way around:

    >>> u = u"I'm a string! Really! \u2764"
    >>> s = u.encode('utf-8')
    >>> s
    "I'm a string! Really! \xe2\x9d\xa4"

I gave Python a Unicode string, and I asked it to translate the string into a sequence of bytes using the 'utf-8' encoding. So it did, and now the heart is just a bunch of bytes it can't print as ASCII, so it shows me the hexadecimal instead.

We can work with other encodings, too, of course:

    >>> s = "I have a section \xa7"
    >>> s.decode('latin1')

('\xa7' is the section character, in both Unicode and Latin-1.)

So for your question, you first need to figure out what encoding your str is in. Did it come from a file? From a web request? From your database? Then the source determines the encoding. Find out the encoding of the source and use that to translate it into unicode.
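As a rough sketch of the same round trip in Python 3, where the byte-sequence type is called bytes and the text type is called str (the roles played by str and unicode in Python 2), something like the following should work. The variable names and sample strings are only illustrative.

```python
# Python 3 sketch: bytes plays the role of Python 2's str,
# and str plays the role of Python 2's unicode.

# A sequence of bytes that happens to contain UTF-8 encoded text.
raw = b"I'm a string! Really! \xe2\x9d\xa4"

# decode: bytes -> text, given the encoding the bytes were written in.
text = raw.decode("utf-8")
print(text[-1] == "\u2764")  # the three trailing bytes became one heart character

# encode: text -> bytes, using the encoding the receiver expects.
round_trip = text.encode("utf-8")
print(round_trip == raw)

# The same byte means different things under different encodings:
# 0xA7 is the section sign in Latin-1, but it is not valid UTF-8 on its own.
section = b"I have a section \xa7"
print(section.decode("latin-1")[-1] == "\u00a7")
try:
    section.decode("utf-8")
except UnicodeDecodeError as exc:
    print("not UTF-8:", exc.reason)
```

Python 3 also removes the implicit ASCII conversion that causes the confusing errors discussed here: bytes has no encode method and str has no decode method, so calling the wrong one fails immediately with an AttributeError.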

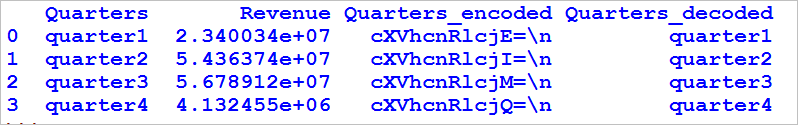

Some details were omitted from the original question, but assuming Python 2.x, the key is to read the error messages carefully: in particular, notice where you call 'encode' but the message says 'decode' and vice versa, and also look at the types of the values included in the messages.

When Python displays an encoded string, the output is not actually human-readable text. It is only represented as the original string for readability, prefixed with a b to denote that it is not a string but a sequence of bytes.

Both encode and decode accept an errors argument that controls what happens when a character cannot be converted. Some of the available handlers are listed below:

| Type of Error | Description |
| --- | --- |
| strict | Default behavior, which raises UnicodeEncodeError (or UnicodeDecodeError when decoding) on failure. |
| ignore | Drops the un-encodable Unicode characters from the result. |
| replace | Replaces all un-encodable Unicode characters with a question mark (?). |
| backslashreplace | Inserts a backslash escape sequence (\uNNNN) in place of un-encodable Unicode characters. |

Let us look at the above concepts using a simple example.
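The error handlers can be tried out directly. The snippet below is a minimal Python 3 sketch: the sample text and the helper name encode_ascii are made up for illustration, and ASCII is chosen as the target only because it cannot represent the two non-ASCII characters.

```python
# Python 3 sketch of the error handlers; the sample text is invented.
text = "caf\u00e9 \u2764"  # 'café' plus a heart: two characters ASCII cannot represent


def encode_ascii(value, errors):
    """Encode value to ASCII with the given handler, or return the exception raised."""
    try:
        return value.encode("ascii", errors=errors)
    except UnicodeEncodeError as exc:
        return exc


results = {
    name: encode_ascii(text, name)
    for name in ("strict", "ignore", "replace", "backslashreplace")
}

for name, outcome in results.items():
    print(f"{name:>16}: {outcome!r}")
```

One detail worth knowing: backslashreplace writes \xNN for code points below 256 and \uNNNN for larger ones, so the two characters in this sample are escaped differently.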

Original string: This is a simple sentence.

Encoded string: b'This is a simple sentence.'

NOTE: As you can observe, we have encoded the input string in the UTF-8 format. Although there is not much of a difference, you can observe that the encoded string is prefixed with a b. This means that the string has been converted to a stream of bytes, which is how it is stored on any computer.
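To see why the b prefix matters, the sketch below (Python 3) encodes two strings to UTF-8 and compares their types and lengths. The first string mirrors the example above. The second, with accented characters, is an added sample that is not part of the original example.

```python
# Python 3 sketch; `plain` mirrors the example above, `accented` is an added sample.
plain = "This is a simple sentence."
accented = "na\u00efve caf\u00e9"  # 'naïve café'

plain_bytes = plain.encode("utf-8")
accented_bytes = accented.encode("utf-8")

# The encoded value is a different type, not just a string with a prefix.
print(type(plain), type(plain_bytes))
print(plain_bytes)

# ASCII-only text: one byte per character, so the repr looks like the original.
print(len(plain), len(plain_bytes))

# Non-ASCII text: some characters take more than one byte in UTF-8.
print(len(accented), len(accented_bytes))
print(accented_bytes)

# Decoding with the same encoding recovers the original text.
print(accented_bytes.decode("utf-8") == accented)
```

For pure ASCII input the bytes and the text look alike, which is why the difference is easy to miss until a non-ASCII character shows up.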
